Algorithms Now Make War Decisions: The Controversy Over AI Targeting

- Mar 15

Artificial intelligence is rapidly changing the nature of modern warfare. Military forces around the world are increasingly using AI systems to analyse intelligence, track potential threats, and identify targets with unprecedented speed. Supporters argue that these technologies can improve precision and help commanders make better-informed decisions. Yet a recent incident during the conflict involving the United States and Iran has sparked serious public debate about the risks of relying on artificial intelligence in life-and-death situations.

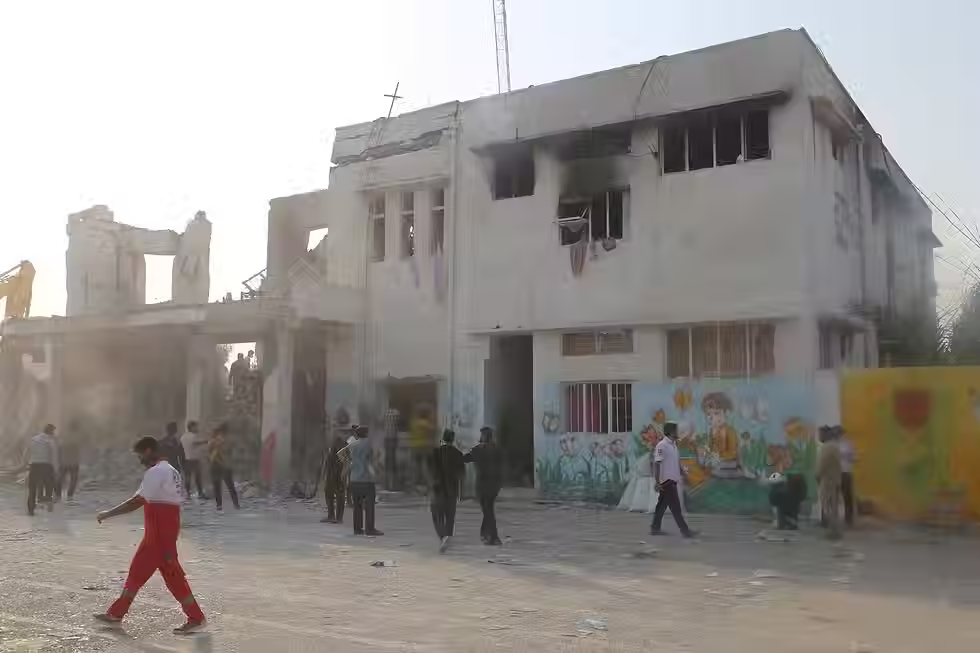

The Washington Post reported that a controversial strike may have hit an elementary school that had reportedly been flagged as a potential military site by targeting systems used during the conflict. Investigators believe the location may have been mistakenly identified as a legitimate target through automated analysis of intelligence data. The strike has drawn widespread attention because it illustrates how errors in complex AI-assisted systems can have devastating consequences when civilians are present.

The story has triggered strong emotional reactions around the world. For many people, the idea that an algorithm could influence decisions that affect human lives is deeply unsettling. Warfare has always involved difficult moral choices, but the involvement of advanced technology introduces a new layer of uncertainty. When a machine contributes to identifying a target, questions quickly arise about who bears responsibility if something goes wrong.

At the same time, some military analysts argue that artificial intelligence can actually reduce the risk of mistakes in combat. AI systems are capable of processing enormous volumes of information from satellites, surveillance drones, and other sensors far more quickly than human analysts. In theory, this ability allows commanders to build a clearer picture of complex environments and avoid errors that might occur when humans must analyse data under intense time pressure.

Despite these potential advantages, critics warn that the speed of AI-assisted decision-making may also create new dangers. Modern conflicts often move quickly, and automated tools can compress hours of intelligence analysis into minutes. When decisions are made under such conditions, even small errors in data or algorithms may lead to tragic outcomes. In the case of the suspected school strike, critics argue that reliance on automated systems could have contributed to a misinterpretation of the site’s purpose.

The controversy has therefore intensified a broader debate about the reliability and accountability of artificial intelligence in military operations. If AI systems play a role in identifying targets, determining responsibility becomes far more complicated. Military commanders remain officially responsible for final decisions, yet the analytical process increasingly involves complex algorithms that few people fully understand. This raises difficult ethical questions about transparency and oversight in technologically advanced warfare.

The incident has also reignited discussions about whether stronger international rules are needed to regulate the use of artificial intelligence in combat. Some experts believe that governments should establish clearer standards for how AI systems are developed and deployed in military contexts. Others argue that limiting access to such technologies could place certain countries at a strategic disadvantage, particularly as global competition for technological leadership intensifies.

For society, the debate surrounding AI targeting reflects a deeper tension between technological progress and ethical responsibility. Artificial intelligence has the potential to enhance human capabilities and provide valuable insights in complex situations. At the same time, the stakes are dramatically higher when these tools are used in environments where human lives are directly at risk.

Ultimately, the controversy surrounding the suspected strike highlights the profound challenges that come with integrating artificial intelligence into warfare. The technology promises greater efficiency and analytical power, yet it also raises questions about trust, accountability, and moral judgment. As governments continue to invest in AI-driven defence systems, societies will need to decide how much responsibility they are willing to place in the hands of machines. The choices made in the coming years may shape not only the future of warfare but also the ethical boundaries of artificial intelligence itself.
